A practical look at running CKEditor AI on your own infrastructure: wiring the editor to a self-hosted LLM and registering MCP tools so it can act on your data without that data ever leaving your premises.

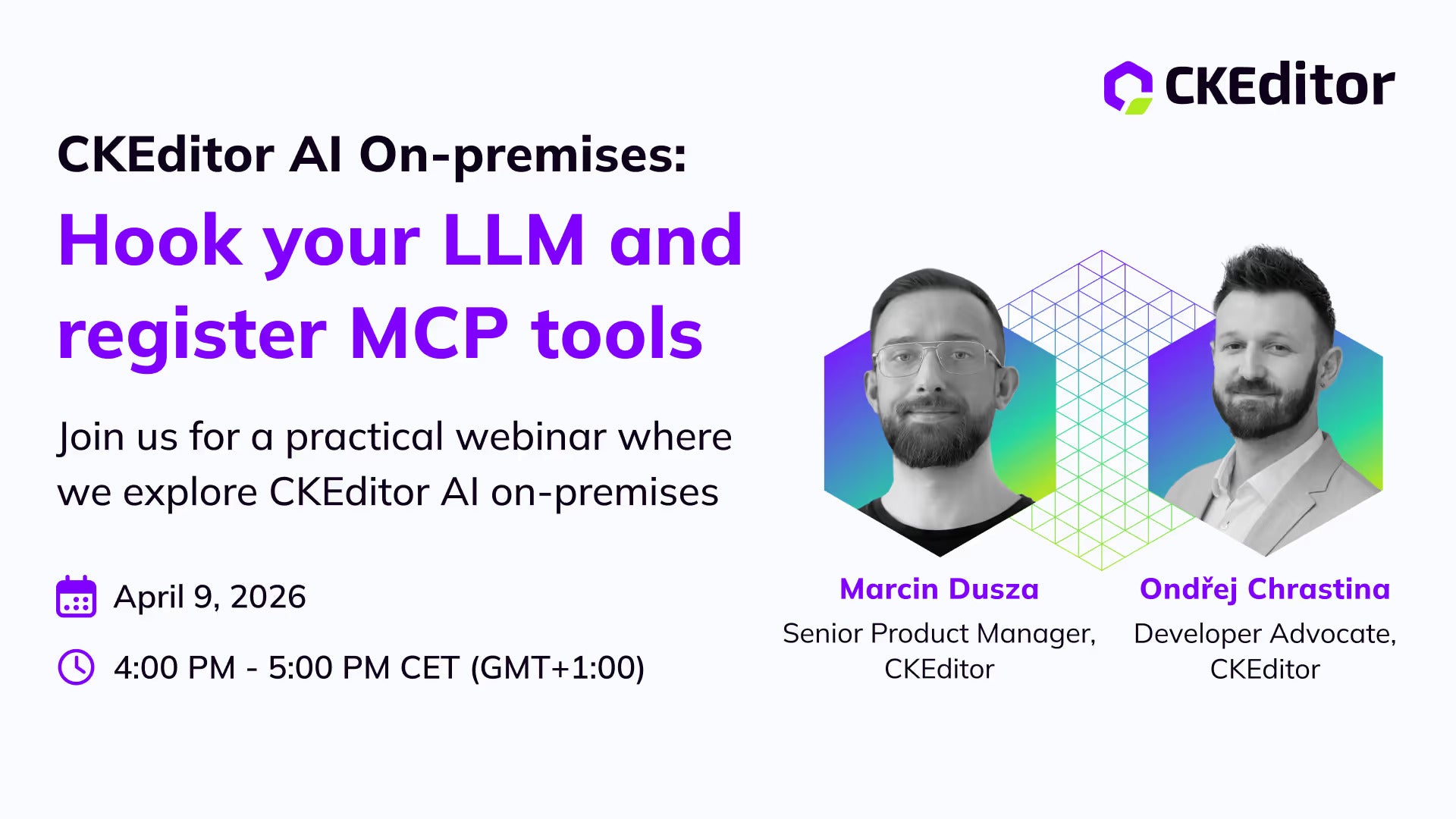

Released: Thu Apr 09 2026

What the session covered

If you've ever shipped an enterprise feature only to hit a wall labelled "data residency," you know the pain. Your users want a co-writer that lives in the editor; your security team wants the model to live next to the database. This session walked through how to satisfy both.

The moving parts

- Hooking CKEditor AI to your own LLM endpoint: local, self-hosted, or inside your VPC.

- Registering MCP tools so the editor can pull live context (search, lookup, retrieval) without leaking content to a third-party model.

- Prompt routing, tool registration, and where authentication slots in.
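To make those three pieces concrete, here is a minimal TypeScript sketch of how tool registration, prompt routing, and authentication might fit together on the integration side. It is an illustration only: `ToolRegistry`, `routePrompt`, `buildRequest`, the `search_docs` tool, the `llm.internal.example` URLs, and the bearer-token scheme are all assumptions made up for this example, not the CKEditor AI API or an MCP SDK. Nothing here performs a network call; it only assembles the request an on-premises endpoint would receive.

```typescript
// Hypothetical sketch: these types and functions are illustrative only and are
// not part of CKEditor AI or any MCP SDK.

type ToolArgs = Record<string, string>;

interface ToolSpec {
  name: string;
  description: string;
  handler: (args: ToolArgs) => Promise<string>;
}

class ToolRegistry {
  private readonly tools: ToolSpec[] = [];

  // Reject duplicate names so a later registration cannot silently shadow a tool.
  register(tool: ToolSpec): void {
    if (this.tools.some((t) => t.name === tool.name)) {
      throw new Error(`Tool already registered: ${tool.name}`);
    }
    this.tools.push(tool);
  }

  // Metadata sent with the prompt so the model knows which tools it may request.
  describe(): { name: string; description: string }[] {
    return this.tools.map((t) => ({ name: t.name, description: t.description }));
  }

  // Runs a tool locally; the data it reads stays on your side of the boundary.
  call(name: string, args: ToolArgs): Promise<string> {
    const tool = this.tools.filter((t) => t.name === name)[0];
    if (!tool) {
      return Promise.reject(new Error(`Unknown tool: ${name}`));
    }
    return tool.handler(args);
  }
}

interface Route {
  matches: (prompt: string) => boolean;
  endpoint: string;
}

// The first matching route wins; otherwise the fallback endpoint is used.
function routePrompt(prompt: string, routes: Route[], fallback: string): string {
  for (const route of routes) {
    if (route.matches(prompt)) {
      return route.endpoint;
    }
  }
  return fallback;
}

interface OutboundRequest {
  url: string;
  headers: Record<string, string>;
  body: string;
}

// Authentication slots in here: the token is attached once, where the request is built.
function buildRequest(
  prompt: string,
  registry: ToolRegistry,
  routes: Route[],
  fallback: string,
  token: string
): OutboundRequest {
  return {
    url: routePrompt(prompt, routes, fallback),
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${token}`,
    },
    body: JSON.stringify({ prompt, tools: registry.describe() }),
  };
}

// Example wiring with made-up names and internal-only URLs.
const exampleRegistry = new ToolRegistry();
exampleRegistry.register({
  name: "search_docs",
  description: "Full-text search over the internal document store",
  handler: async (args) => `results for "${args["query"] ?? ""}"`,
});

const exampleRoutes: Route[] = [
  {
    matches: (p) => p.startsWith("/summarize"),
    endpoint: "https://llm.internal.example/summarize",
  },
];

const exampleRequest = buildRequest(
  "/summarize this draft",
  exampleRegistry,
  exampleRoutes,
  "https://llm.internal.example/chat",
  "token-from-your-idp"
);
```

Keeping routing and authentication in one request builder means every prompt takes the same path out of the editor, which makes it easier to audit that nothing is sent to an endpoint outside your network.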

Who it was for

Engineering leads, AI architects, and platform teams who need rich-text AI features but can't ship anything that talks to a public model. There's always one constraint that breaks the obvious approach, and an on-premises requirement is usually it.